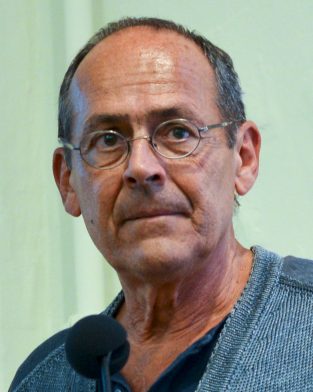

Perfect Memory's Scientific Committee

In his tribute to Bernard Stiegler, who died in the summer of 2020, Steny Solitude evokes the theoretical foundations of Perfect Memory.

« Bernard Stiegler founded the “Engineering of Cultural Industries” course at the Compiègne University of Technology (UTC). I had the luck and pleasure of being part of the first class of this original course, which mixed humanities and sciences in equal parts. I also had the honor of joining the small team he had set up to reflect on the challenges raised by the acculturation of the general public to the World Wide Web and audiovisual writing: the “Digital Territories” project.

He argued: “The moving image has a specificity that text does not have: anyone can read a video without knowing the grammar of its writing. This leaves room for all kinds of manipulation and excess. This must be changed.” Many boundaries were pushed back on this occasion: the first navigable and interactive video in a browser (4 years before Flash, Macromedia, Adobe, etc.), the first semantic camera, and the first project to exploit models of co-construction and the economy of contribution, through the public's appropriation of new collaborative tools.

This changed the course of my life. I had gone to the UTC to work on the systemic mechanics of knowledge transmission and I discovered epiphylogenesis, memory retentions, temporal objects, Husserl, Adorno, Leroi-Gourhan, Bernard Stiegler and a whole intellectual world… The founding of Perfect Memory is the very embodiment of many concepts from the school of thought he established at the UTC, which Bruno Bachimont has carried on. »

Between 2013 and 2017, a new approach to Perfect Memory was built through Lénaïk Leyoudec’s doctoral thesis, which focused on the editorialisation of digital heritage documents. Supervised by Bruno Bachimont, then director of research at the UTC, this research strengthened the link between the startup and the engineering school.

In 2020, as Perfect Memory's growth accelerated, the management team felt the need to reconnect with fundamental research. « Understand in order to do, and do in order to understand », one of the UTC’s watchwords, is echoed in the reality of Perfect Memory, whose team trains itself to think in a « meta » way while building the platform.

The idea of a scientific committee emerged. Its function would be to question the technological artefact built by Perfect Memory, using theories from the human and social sciences as well as the engineering sciences. This committee, made up of university researchers and headed by Bruno Bachimont, would first hold regular exchanges with the Perfect Memory team, then bring theoretical insights, and finally, in a third phase, share with the scientific community the knowledge drawn from the « Perfect Memory » field.

As an entry point to the themes addressed by the scientific committee, let us look at the pairing of « data intelligibility » and « operational calculability » that Bruno Bachimont presented to the Perfect Memory team in October 2020:

The massification of data and the complexity of algorithms lead to:

- Results whose relevance is difficult to validate. Either they conform to a model but are sometimes far from the real data (since the model makes choices in order to formalize and standardize knowledge), or they conform to the real data but rely on intermediate results that are sometimes uninterpretable.

- Difficulty taking prior knowledge into account: modelling that knowledge, then taking the resulting models into account when producing and interpreting results.

There are two approaches in which these problems arise:

- Structured data: semantics and syntax are kept consistent; interpretability and explicability are guaranteed, but robustness is low and modelling is difficult.

- Learned data: processing is robust, but the results lack interpretability and explicability. Prior knowledge is not taken into account.

The problem can therefore be stated as follows:

« How can we run relevant computations on numerous or massive data and still produce interpretable results? »

Steny Solitude – CEO Perfect Memory

Image by Berkeley Center for New Media, 2016 HTNM Bernard Stiegler, CC BY-SA 2.0